Today's graphic is motivated by recent posts by Paul Krugman on the implications of capital-biased technological change. In both posts Krugman uses the ratio of employee compensation (COE) to nominal GDP as his measure of labor's share of income. Although the data for both series go back to 1947, Krugman chooses to drop the data prior to 1973, arguing that 1973 marked the end of the post-WWII economic boom. Put another way, Krugman is saying that there is a structural break in the data generating process for labor's share, which makes data prior to 1973 useless (or perhaps actively misleading) if one is interested in thinking about future trends in labor's share.

If you are wondering what a plot of the entire time series looks like, here is the ratio of COE to GDP from 1947 forward.

It looks like the employee compensation ratio is roughly the same today as it was in 1950 (although obviously heading in different directions!).

In his first post Krugman argues that this measure "fluctuates over the business cycle." Note that the vertical scale ranges only from 0.52 to 0.60. Such a narrow range will exaggerate fluctuations in the series. Plotting the same data on its natural scale (i.e., 0 to 1) yields the following.

Based on this plot, the measure appears to have been remarkably constant over the past 60-odd years.
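To put a number on how much the choice of axis matters, here is a minimal sketch that computes what fraction of the plot height the data occupy under each scale. The axis limits 0.52 to 0.60 and 0 to 1 come from the two plots described above; the data range of 0.53 to 0.59 is a hypothetical round figure chosen only for illustration, not a value read from the actual series.

```python
def share_of_axis(data_min, data_max, axis_min, axis_max):
    """Fraction of the vertical axis spanned by the data."""
    return (data_max - data_min) / (axis_max - axis_min)

# Hypothetical range for COE / GDP, chosen only to illustrate the point.
data_min, data_max = 0.53, 0.59

zoomed = share_of_axis(data_min, data_max, 0.52, 0.60)   # narrow axis
natural = share_of_axis(data_min, data_max, 0.0, 1.0)    # 0-to-1 axis

print(f"narrow axis:  {zoomed:.0%} of the plot height")
print(f"natural axis: {natural:.0%} of the plot height")
```

The same six-percentage-point swing fills most of the narrow axis but only a thin band of the natural one, which is why the two plots leave such different impressions.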

Which of these plots gives the more "correct" view of the data? Or does it depend on the point you are trying to make?

As always, code is available.

Monday, December 31, 2012

Friday, December 28, 2012

Graph of the Day

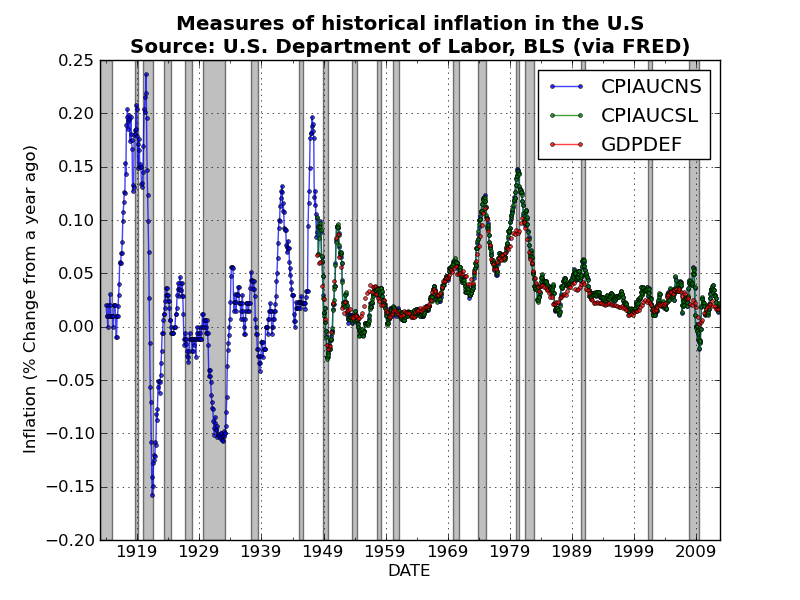

Took a few days off blogging for Christmas and Boxing Day, but am now back at it! Here is a quick plot of historical measures of inflation in the U.S. I used Pandas to grab the three price indices, and then used a nice built-in Pandas method, pct_change(periods), to convert the monthly price indices (i.e., CPIAUCNS and CPIAUCSL) and the quarterly GDP deflator to measures of percentage change in prices from a year ago (which is a standard measure of inflation).

After combining the three series into a single DataFrame object, you can plot all three series with a single line of code!

Unsurprisingly the three measures track one another very closely. Perhaps I should have thrown in some measures of producer prices? Code is available here.
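For readers who want to see what the year-ago conversion does, here is a minimal pure-Python sketch of the calculation. The function below is my own stand-in that mirrors what pct_change(periods) computes for a single series; it is not the pandas implementation, and the price index is made up rather than actual CPI data.

```python
def pct_change(values, periods=1):
    """Percentage change relative to the observation `periods` steps earlier.

    Returns None for the first `periods` entries, where no earlier
    observation exists (pandas reports NaN there).
    """
    out = []
    for i, current in enumerate(values):
        if i < periods:
            out.append(None)
        else:
            previous = values[i - periods]
            out.append(current / previous - 1.0)
    return out

# Made-up monthly price index growing 0.25% per month (not real CPI data).
index = [100.0 * 1.0025 ** month for month in range(24)]

inflation = pct_change(index, periods=12)   # change from a year ago
print(inflation[12])
```

For a monthly index the year-ago comparison uses periods=12; for a quarterly series such as the GDP deflator it would be periods=4.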

Labels: Graph of the Day, Inflation, Macroeconomics, matplotlib, Pandas, Python

Monday, December 24, 2012

Graph(s) of the Day!

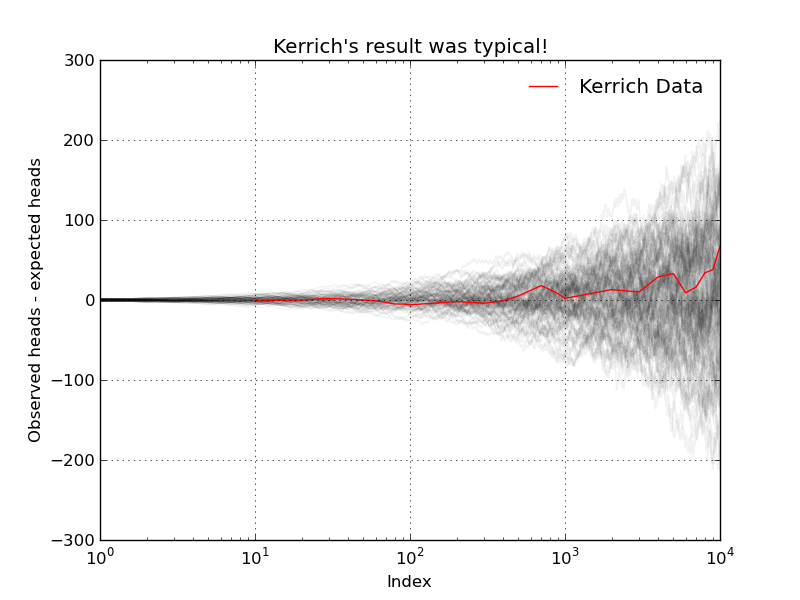

Today's graphic(s) attempt to dispel a common misunderstanding of basic probability theory. We all know that a fair coin lands heads with probability 0.5. Given this, many people seem to think that the Law of Large Numbers (LLN) tells us that the observed number of heads should more or less equal the expected number of heads. This intuition is wrong!

A South African mathematician named John Kerrich was visiting Copenhagen in 1940 when Germany invaded Denmark. Kerrich spent the next five years in an internment camp where, to pass the time, he carried out a series of experiments in probability theory...including an experiment where he flipped a coin by hand 10,000 times! He apparently also used ping-pong balls to demonstrate Bayes' theorem.

After the war Kerrich was released and published the results of many of his experiments. I have copied the table of the coin flipping results reported by Kerrich below (and included a csv file on GitHub). The first two columns are self-explanatory; the third column, Difference, is the difference between the observed number of heads and the expected number of heads.

Below I plot the data in the third column: the difference between the observed number of heads and the expected number of heads is diverging (which is the exact opposite of most people's intuition)!

Perhaps Kerrich made a mistake (he didn't), but we can check his results via simulation! First, a single replication of T = 10,000 flips of a fair coin...

Again, we observe divergence (but this time in the opposite direction!). For good measure, I ran N=100 replications of the same experiment (i.e., flipping a coin T=10,000 times). The result is the following nice graphic...

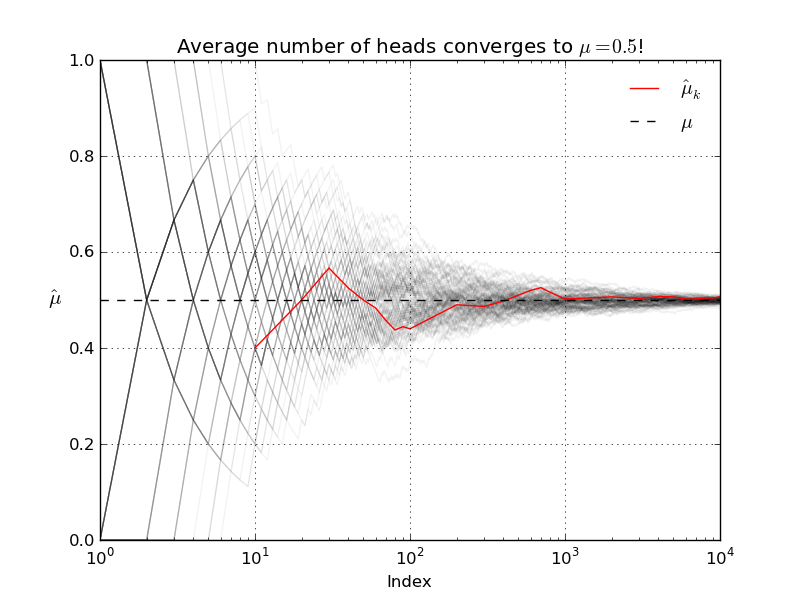

Our simulations suggest that Kerrich's result was indeed typical. The LLN does not say that as T increases the observed number of heads will be close to the expected number of heads! What the LLN says instead is that, as T increases, the fraction of flips that come up heads (i.e., the sample average) will get closer and closer to the true population average (which in this case, with our fair coin, is 0.5).
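The reason the difference tends to grow is that the number of heads in T fair flips has variance T/4, so the typical size of the difference scales like sqrt(T)/2. Here is a minimal sketch that checks this by simulation. It uses only Python's standard random module; the seed, the helper names, and the choice of T values are my own illustrative assumptions and are not taken from the code behind the graphics.

```python
import math
import random

def heads_minus_expected(num_flips, rng):
    """Observed heads minus expected heads after num_flips fair flips."""
    heads = sum(rng.random() < 0.5 for _ in range(num_flips))
    return heads - num_flips / 2

def rms_difference(num_flips, num_runs, rng):
    """Root-mean-square difference across independent replications."""
    squares = [heads_minus_expected(num_flips, rng) ** 2 for _ in range(num_runs)]
    return math.sqrt(sum(squares) / num_runs)

rng = random.Random(2012)  # arbitrary seed so the run is repeatable
for T in (100, 1_000, 10_000):
    observed = rms_difference(T, num_runs=100, rng=rng)
    theory = math.sqrt(T) / 2   # standard deviation of the head count
    print(f"T={T:>6}: typical |difference| ~ {observed:6.1f} (theory {theory:5.1f})")
```

Dividing the difference by T gives the gap between the sample average and 0.5, which scales like 1/(2 sqrt(T)) and therefore shrinks even as the raw difference grows.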

| Tosses | Heads | Difference |
| --- | --- | --- |
| 10 | 4 | -1 |
| 20 | 10 | 0 |
| 30 | 17 | 2 |
| 40 | 21 | 1 |
| 50 | 25 | 0 |
| 60 | 29 | -1 |
| 70 | 32 | -3 |
| 80 | 35 | -5 |
| 90 | 40 | -5 |
| 100 | 44 | -6 |
| 200 | 98 | -2 |
| 300 | 146 | -4 |
| 400 | 199 | -1 |
| 500 | 255 | 5 |
| 600 | 312 | 12 |
| 700 | 368 | 18 |
| 800 | 413 | 13 |
| 900 | 458 | 8 |
| 1000 | 502 | 2 |
| 2000 | 1013 | 13 |
| 3000 | 1510 | 10 |
| 4000 | 2029 | 29 |
| 5000 | 2533 | 33 |
| 6000 | 3009 | 9 |
| 7000 | 3516 | 16 |
| 8000 | 4034 | 34 |
| 9000 | 4538 | 38 |
| 10000 | 5067 | 67 |

Let's run another simulation to verify that the LLN actually holds. In this experiment I conduct N=100 runs of T=10,000 coin flips. For each of the runs I re-compute the sample average after each successive flip.
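A minimal sketch of that experiment, again using only the standard library, might look like the following. N=100 and T=10,000 are the values from the text; the seed and the function name are illustrative assumptions, and the posted code may well be organized differently.

```python
import random

def running_averages(num_flips, rng):
    """Sample average of heads after each successive flip."""
    heads = 0
    averages = []
    for flip in range(1, num_flips + 1):
        heads += rng.random() < 0.5
        averages.append(heads / flip)
    return averages

rng = random.Random(0)  # arbitrary seed
runs = [running_averages(10_000, rng) for _ in range(100)]

for T in (10, 100, 1_000, 10_000):
    worst = max(abs(run[T - 1] - 0.5) for run in runs)
    print(f"T={T:>6}: largest |average - 0.5| over 100 runs = {worst:.4f}")
```

The largest deviation across the 100 runs shrinks as T grows, which is the convergence that the LLN actually promises.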

As always code and data are available! Enjoy.

Labels: Graph of the Day, matplotlib, NumPy, Pandas, SciPy, Statistics

Sunday, December 23, 2012

Graph of the Day

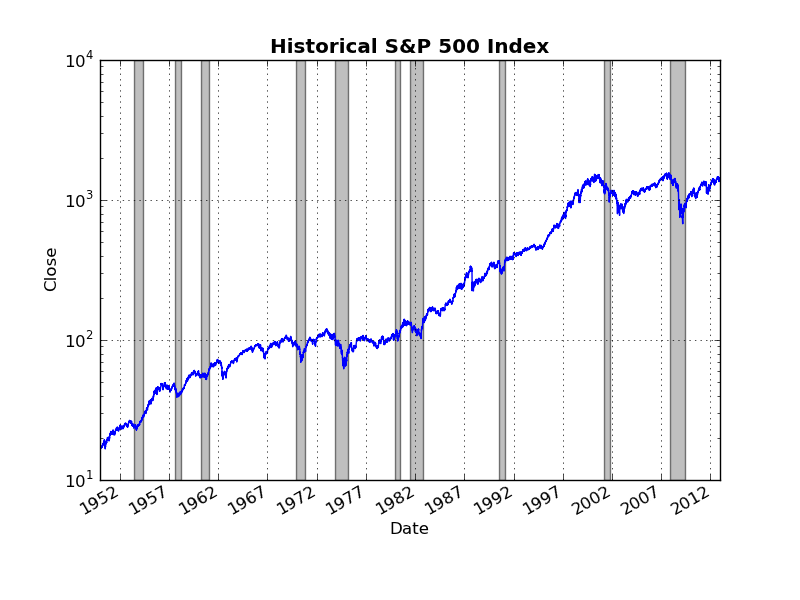

Earlier this week I used Pandas to grab some historical data on the S&P 500 from Yahoo!Finance and generate a simple time series plot. Today, I am going to re-examine this data set in order to show the importance of scaling and adjusting for inflation when plotting economic data.

I again use the functions from pandas.io.data to grab the data. Specifically, I use get_data_yahoo('^GSPC') to get the S&P 500 time series, and get_data_fred('CPIAUCSL') to grab the consumer price index (CPI). Here is a naive plot of the historical level of the S&P 500 from 1950 through 2012 (as usual, includes grey NBER recession bands).

Note that, because the CPI data are monthly frequency, I resample the daily S&P 500 data by taking monthly averages. This plot might make you conclude that there was a massive structural break/regime change around the year 2000 in whatever underlying process is generating the S&P 500. However, as the level of the S&P 500 increases, the linear scale on the vertical axis makes changes from month to month seem more dramatic. To control for this, I simply make the vertical scale logarithmic (now equal distances on the vertical axis represent equal percentage changes in the S&P 500).

Now the "obvious" structural break in the year 2000 no longer seems so obvious. Indeed there was a period of roughly 10-15 years during the late 1960's through 1970's during which the S&P 500 basically moved sideways in a similar manner to what we have experienced during the last 10+ years.

This brings us to another, more significant, problem with these graphs: neither of them adjusts for inflation! When plotting long economic time series it is always a good idea to adjust for inflation. The 1970s were a period of fairly high inflation in the U.S.; thus the fact that the nominal value of the S&P 500 didn't change all that much over this period tells us that, in real terms, the value of the S&P 500 fell considerably.

Using the CPI data from FRED, it is straightforward to convert the nominal value of the S&P 500 index to a real value for some base month/year. Below is a plot of the real S&P 500 (in Nov. 2012 dollars), with a logarithmic scale on the vertical axis. As expected, the real S&P 500 declined significantly during the 1970s.
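The conversion itself is one line: multiply the nominal value by the ratio of the base-period CPI to the CPI of the observation's own period. Here is a minimal sketch with made-up numbers (they are not actual S&P 500 or CPI observations, and the function name is hypothetical).

```python
def to_real(nominal, cpi, base_cpi):
    """Express a nominal value in base-period dollars."""
    return nominal * base_cpi / cpi

# Illustrative numbers only, not actual S&P 500 or CPI observations.
nominal_index = [100.0, 100.0, 100.0]     # flat in nominal terms
cpi = [40.0, 60.0, 80.0]                  # prices double over the period
base_cpi = 230.0                          # CPI in the chosen base month

real_index = [to_real(n, c, base_cpi) for n, c in zip(nominal_index, cpi)]
print(real_index)
```

An index that moves sideways in nominal terms while prices double loses half of its real value, which is the pattern described for the 1970s.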

Code is available on GitHub. Enjoy!

Saturday, December 22, 2012

Graph(s) of the Day

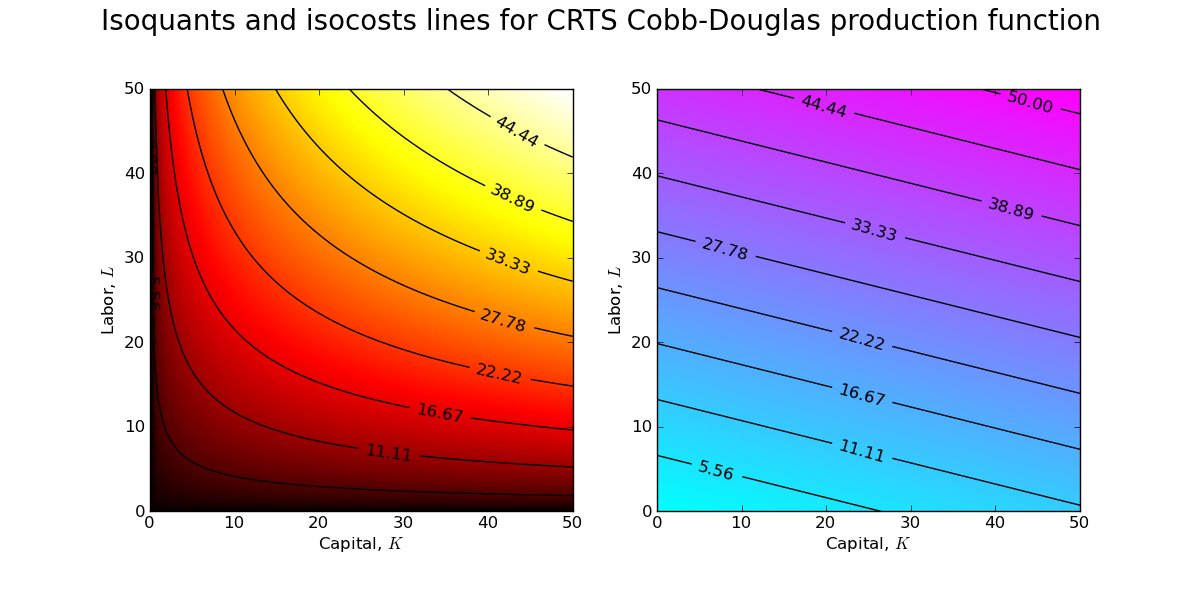

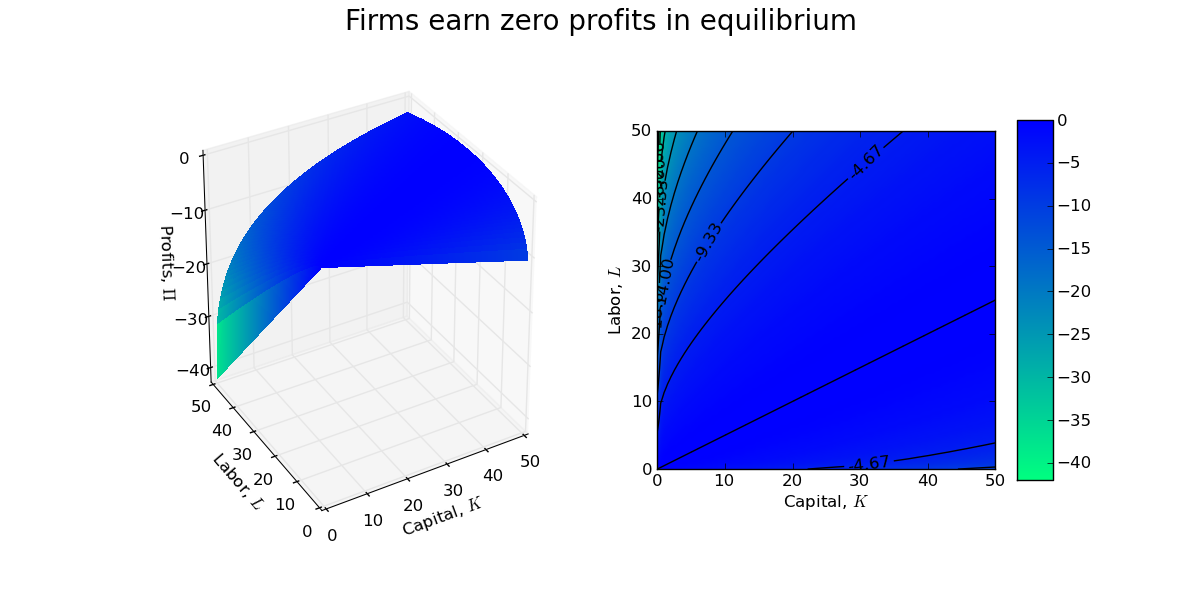

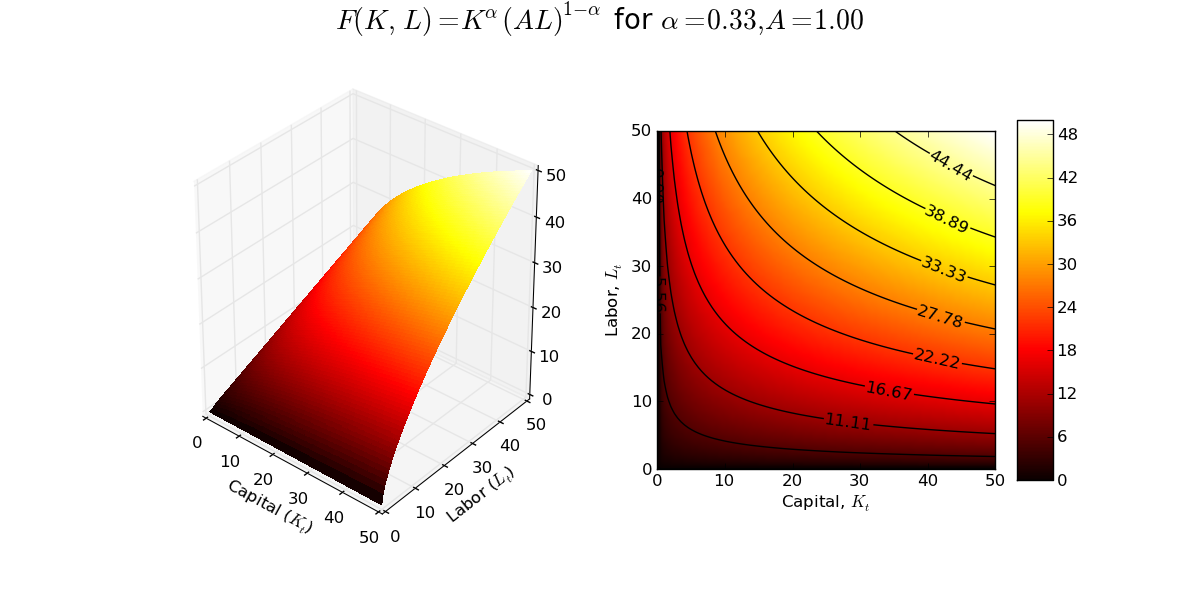

Suppose there is a large number of identical firms in a perfectly competitive industry, each with a constant returns to scale (CRTS) Cobb-Douglas production function: \[Y = F(K, L) = K^{\alpha}(AL)^{1 - \alpha}\] Output, Y, is a homogeneous of degree one function of capital, K, and labor, L; technology, A, is labor augmenting.

Typically, we economists model firms as choosing demands for capital and labor in order to maximize profits while taking prices as given (i.e., unaffected by the decisions of the individual firm):\[\max_{K,L} \Pi = K^{\alpha}(AL)^{1 - \alpha} - (wL + rK)\] where the prices are $1, w, r$. Note that I am following convention in assuming that the price of the output good is the numeraire (i.e., normalized to 1) and thus the real wage, $w$, and the return to capital, $r$, are both relative prices expressed in terms of units of the output good.

The first order conditions (FOCs) of a typical firm's maximization problem are \[\begin{align}\frac{\partial \Pi}{\partial K}=&0 \implies r = \alpha K^{\alpha-1}(AL)^{1 - \alpha} \label{MPK}\\
\frac{\partial \Pi}{\partial L}=&0 \implies w = (1 - \alpha) K^{\alpha}(AL)^{-\alpha}A \label{MPL}\end{align}\] Dividing $\ref{MPK}$ by $\ref{MPL}$ (and a bit of algebra) yields the following equation for the optimal capital/labor ratio: \[\frac{K}{L} = \left(\frac{\alpha}{1 - \alpha}\right)\left(\frac{w}{r}\right)\] The fact that, for a given set of prices $w$, $r$, the optimal choices of $K$ and $L$ are indeterminate (any pair $K$, $L$ whose ratio satisfies the above condition will do) implies that the optimal scale of the firm is also indeterminate.

How can I create a graphic that clearly demonstrates this property of the CRTS production function? I can start by fixing values for the wage and return to capital and then creating contour plots of the production frontier and the cost surface.

The above contour plots are drawn for $w\approx0.84$ and $r\approx0.21$ (which implies an optimal capital/labor ratio of 2:1). You should recognize the contour plot for the production surface (left) from a previous post. The contour plot of the cost surface (right) is a simple plane (which is why the isocost lines are lines and not curves!). Combining the contour plots allows one to see the set of tangency points between isoquants and isocosts.

A firm manager is indifferent between each of these points of tangency, and thus the size/scale of the firm is indeterminate. Indeed, with CRTS a firm will earn zero profits at each of the tangency points in the above contour plot.
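The zero-profit claim can be checked numerically. The sketch below assumes $\alpha = 1/3$ and $A = 1$; these values are my assumption, picked because they reproduce the quoted prices $w\approx0.84$ and $r\approx0.21$ at a capital/labor ratio of 2, and the function names are hypothetical.

```python
ALPHA = 1.0 / 3.0   # assumed capital share
A = 1.0             # assumed technology level

def output(K, L):
    """CRTS Cobb-Douglas production function."""
    return K ** ALPHA * (A * L) ** (1.0 - ALPHA)

def factor_prices(K, L):
    """Return (w, r) from the first order conditions."""
    r = ALPHA * K ** (ALPHA - 1.0) * (A * L) ** (1.0 - ALPHA)
    w = (1.0 - ALPHA) * K ** ALPHA * (A * L) ** (-ALPHA) * A
    return w, r

# Prices consistent with a capital/labor ratio of 2.
w, r = factor_prices(2.0, 1.0)
print(f"w = {w:.2f}, r = {r:.2f}")

# Capital/labor ratio implied by those prices.
ratio = (ALPHA / (1.0 - ALPHA)) * (w / r)

# Profits at several scales along the ray K = 2L.
for L in (0.5, 1.0, 10.0, 1000.0):
    K = ratio * L
    profit = output(K, L) - (w * L + r * K)
    print(f"L = {L:>7}: K = {K:>7.1f}, profit = {profit:.2e}")
```

Profit is zero (up to floating-point error) at every scale along the ray, so nothing in the firm's problem pins down its size.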

As usual, the code is available on GitHub.

Update: Installing MathJax on my blog to render mathematical equations was easy (just a quick cut and paste job).

Friday, December 21, 2012

Gun control...

Via Mark Thoma, Steve Williamson has an excellent post about the economics of gun control:

What's the problem here? People buy guns for three reasons: (i) they want to shoot animals with them; (ii) they want to shoot people with them; (iii) they want to threaten people with them. There are externalities. Gun manufacturers and retailers profit from the sale of guns. The people who buy the guns and use them seem to enjoy having them. But there are third parties who suffer. People shooting at animals can hit people. People who buy guns intending to protect themselves may shoot people who in fact intend no harm. People may temporarily feel compelled to harm others, and want an efficient instrument to do it with.

There are also information problems. It may be difficult to determine who is a hunter, who is temporarily not in their right mind, and who wants to put a loaded weapon in the bedside table.

What do economists know? We know something about information problems, and we know something about mitigating externalities. Let's think first about the information problems. Here, we know that we can make some headway by regulating the market so that it becomes segmented, with these different types of people self-selecting. This one is pretty obvious, and is a standard part of the conversation. Guns for hunting do not need to be automatic or semi-automatic, they do not need to have large magazines, and they do not have to be small. If hunting weapons do not have these properties, who would want to buy them for other purposes?

On the externality problem, we can be more inventive. A standard tool for dealing with externalities is the Pigouvian tax. Tax the source of the bad externality, and you get less of it. How big should the tax be? An unusual problem here is that the size of the externality is random - every gun is not going to injure or kill someone. There's also an inherent moral hazard problem, in that the size of the externality depends on the care taken by the gunowner. Did he or she properly train himself or herself? Did they store their weapon to decrease the chance of an accident?

What's the value of a life? I think when economists ask that question, lay people are offended. I'm thinking about it now, and I'm offended too. If someone offered me \$5 million for my cat, let alone another human being, I wouldn't take it.

In any case, the Pigouvian tax we would need to correct the externality should be a large one, and it could generate a lot of revenue. If there are 300 million guns in the United States, and we impose a tax of \$3600 per gun on the current stock, we would eliminate the federal government deficit. But \$3600 is coming nowhere close to the potential damage that a single weapon could cause. A potential solution would be to have a gun-purchaser post collateral - several million dollars in assets - that could be confiscated in the event that the gun resulted in injury or loss of life. This has the added benefit of mitigating the moral hazard problem - the collateral is lost whether the damage is "accidental" or caused by, for example, someone who steals the gun.

Of course, once we start thinking about the size of the tax (or collateral) needed to correct the inefficiency that exists here, we'll probably come to the conclusion that it is more efficient just to ban particular weapons and ammunition at the point of manufacture. I think our legislators should take that as far as it goes.

Graph of the Day

Today's graphic demonstrates the use of Pandas to grab data from Yahoo!Finance. The code I wrote uses pandas.io.data.get_data_yahoo() to grab historical daily data on the S&P 500 index and then generates a simple time series plot. I went ahead and added the NBER recession bars for good measure. Note the use of a logarithmic scale on the vertical axis. Enjoy!

Thursday, December 20, 2012

Graph of the Day

Today's graph is a combined 3D plot of the production frontier associated with the constant returns to scale Cobb-Douglas production function and a contour plot showing the isoquants of the production frontier. This static snapshot was created using matplotlib (the code also includes an interactive version of the 3D production frontier implemented in Mayavi).

At some point I will figure out how to embed the interactive Mayavi plot into a blog post so that readers can manipulate the plot and change parameter values. If anyone knows how to do this already, a pointer would be much appreciated!

Wednesday, December 19, 2012

Blogging to resume again!

It has been far too long since my last post. Life (becoming a father), travel (summer research trip to SFI), teaching (am teaching a course on Computational Economics), and research (also trying to finish my PhD!) have a way of getting in the way of my blogging. As a mechanism to slowly move back into the blog world, I have decided to start a 'Graphic of the Day' series. Each day I will create a new economic graphic using my favorite Python libraries (mostly Pandas, matplotlib, NumPy/SciPy).

The inaugural 'Graph of the Day' is Figure 1-1 from Mankiw's intermediate undergraduate textbook Macroeconomics.

Real GDP measures the total income of everyone in the economy, and real GDP per person measures the income of the average person in the economy. The figure shows that real GDP per person tends to grow over time and that this normal growth is sometimes interrupted by periods of declining income (i.e., the grey NBER bars!), called recessions or depressions.

Note that Real GDP per person is plotted on a logarithmic scale. On such a scale equal distances on the vertical axis represent equal percentage changes. This is why the distance between \$8,000 and \$16,000 (a 100% increase) is the same as the distance between \$32,000 and \$64,000 (also a 100% increase).
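The claim about equal distances is easy to verify: on a logarithmic axis the distance between two values depends only on their ratio. Here is a small sketch using the dollar figures from the paragraph above (the helper name is my own).

```python
import math

def log_distance(low, high):
    """Vertical distance between two values on a logarithmic axis."""
    return math.log10(high) - math.log10(low)

print(log_distance(8_000, 16_000))      # a doubling
print(log_distance(32_000, 64_000))     # another doubling
print(16_000 - 8_000, 64_000 - 32_000)  # the same gaps on a linear axis
```

Both doublings cover the same distance on the log axis, whereas on a linear axis the second gap would be four times as tall as the first.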

The Python code is available on GitHub for download (I used pandas.io.data.get_data_fred() to grab the data). The graphic is a bit boring. I was a bit depressed to find that the longest time series for U.S. per capita real GDP only goes back to 1960! This seems a bit scandalous...but perhaps I was just using the wrong data tags!

Thursday, June 7, 2012

5-6 June 2012 at the 2012 CSSS...

For the past two days the focus has been on tools for analyzing non-linear dynamical (and specifically chaotic) systems. We have been using a program called TISEAN to do most of the analysis. There also seem to be a number of R packages for doing non-linear time series analysis: RTisean, tsDyn, tseriesChaos, etc.

Henon Map:

Our first task was to simply use TISEAN to generate some trajectories of the Henon map, plot them using our favorite plotting tool (at the moment I am working on improving my Python coding so I am using matplotlib) and then analyze the power spectrum (sometimes called spectral density). TISEAN's version of the Henon map is:

\[x_{t+1} = 1 - A x_t^2 + B y_t\]

\[y_{t+1} = x_t\]
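If you do not have TISEAN installed, the map is simple enough to iterate directly. Here is a minimal sketch in plain Python; the starting point (0, 0), the burn-in length, and the function names are my own illustrative choices and are not TISEAN defaults.

```python
def henon(x, y, A, B):
    """One step of the Henon map in the parameterization given above."""
    return 1.0 - A * x * x + B * y, x

def trajectory(A, B, steps, burn_in=2_000, start=(0.0, 0.0)):
    """Iterate the map, discard the transient, and return the (x, y) points."""
    x, y = start
    for _ in range(burn_in):
        x, y = henon(x, y, A, B)
    points = []
    for _ in range(steps):
        x, y = henon(x, y, A, B)
        points.append((x, y))
    return points

# A=0.8, B=0: count the distinct points visited after the transient.
cycle = trajectory(A=0.8, B=0.0, steps=100)
distinct = sorted({(round(x, 6), round(y, 6)) for x, y in cycle})
print(distinct)

# A=1.4, B=0.3: the same count for the second parameter pair.
chaotic = trajectory(A=1.4, B=0.3, steps=100)
print(len({(round(x, 6), round(y, 6)) for x, y in chaotic}))
```

For A=0.8 and B=0 the x-dynamics reduce to $x_{t+1} = 1 - 0.8x_t^2$, whose period-2 points are $(1 \pm \sqrt{4A - 3})/(2A)$, so the first print should show exactly two points.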

For A=0.8 and B=0, the attractor is a simple 2-cycle which means that a plot of the trajectory of the map in state space will yield two points:

However, for A=1.4 and B=0.3, the Henon map displays chaotic dynamics:

Power Spectrum: A good place to start the analysis of time series data is to examine the power spectrum (or spectral density) of the data. For the Henon map with A=0.8 and B=0, the attractor is a 2-cycle which implies that the dominant frequency should be 1/2.

Note the above plot has a single "spike" at a frequency of 0.5. What other frequencies are present in the time series generated by the Henon map? Given that the map is a 2-cycle, in theory, there should be only a single frequency present in the data. Although my computer can represent much smaller numbers, the smallest relative difference between two numbers that my computer can resolve (i.e., my machine ε, the gap between 1 and the next representable number) is 2.2204460492503131e-16. The other "spikes" in the above plot are thus nonsensical results of my computer doing calculations at the limit of its floating-point precision. This is a good example of floating-point round-off error.
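The distinction between "smallest representable number" and machine ε is easy to see in Python, where floats are IEEE 754 doubles. This is a small illustrative check using the standard sys module, not part of the TISEAN analysis.

```python
import sys

eps = sys.float_info.epsilon
print(eps)                       # gap between 1.0 and the next float

print(1.0 + eps == 1.0)          # False: the sum is a different float
print(1.0 + eps / 2 == 1.0)      # True: the addition is rounded away

tiny = 1e-300
print(tiny > 0.0)                # True: far smaller than eps, yet representable
```

Anything in the spectrum that sits some sixteen orders of magnitude below the main spike is therefore indistinguishable from rounding noise.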

Given that the Henon map with A=1.4 and B=0.3 exhibits chaotic dynamics, we expect the power spectrum to exhibit power at all frequencies.
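To see where the spike at 0.5 comes from, here is a minimal sketch that computes a raw periodogram with a hand-written discrete Fourier transform. This is a stand-in for TISEAN's spectrum tool, not a reproduction of it: the series length of 64, the mean removal, and the lack of any windowing or normalization are my own simplifying assumptions.

```python
import cmath

def periodogram(series):
    """Power at frequencies k/n for k = 0, ..., n/2 (mean removed first)."""
    n = len(series)
    mean = sum(series) / n
    centered = [value - mean for value in series]
    spectrum = []
    for k in range(n // 2 + 1):
        coefficient = sum(
            value * cmath.exp(-2j * cmath.pi * k * t / n)
            for t, value in enumerate(centered)
        )
        spectrum.append((k / n, abs(coefficient) ** 2))
    return spectrum

# Henon map with A=0.8, B=0 reduces to x -> 1 - 0.8 x^2.
x = 0.0
for _ in range(2_000):          # discard the transient
    x = 1.0 - 0.8 * x * x
series = []
for _ in range(64):
    x = 1.0 - 0.8 * x * x
    series.append(x)

spectrum = periodogram(series)
peak_frequency, peak_power = max(spectrum, key=lambda pair: pair[1])
print(peak_frequency, peak_power)

# Largest power found at any other frequency.
leak = max(power for frequency, power in spectrum if frequency != peak_frequency)
print(leak)
```

The power at every other frequency is many orders of magnitude below the peak, which is the round-off residue discussed above rather than genuine signal.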

The Lorenz System:

Next we want to plot some trajectories of the Lorenz system and then analyze the resulting power spectra. The Lorenz system is a classic model of chaos developed by Edward Lorenz to model weather/climate systems. For parameter values R=15, S=16, B=4 the system exhibits a unique fixed point attractor... or in 3D if you prefer:

The power spectrum for the unique fixed point attractor looks as follows:

One gets much more interesting dynamics out of the Lorenz system simply by changing R. For parameter values R=45, S=16, B=4 the system exhibits chaos:

For R=45, the power spectrum exhibits power at all frequencies:
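For anyone without TISEAN, the two regimes can be reproduced with a short fixed-step integrator. The sketch below assumes the standard Lorenz equations, $\dot{x} = S(y - x)$, $\dot{y} = Rx - y - xz$, $\dot{z} = xy - Bz$, with R, S, B playing the roles usually written $\rho$, $\sigma$, $\beta$. That mapping, the Runge-Kutta scheme, the step size, and the starting points are all my assumptions rather than TISEAN's settings.

```python
import math

def lorenz(state, R, S, B):
    """Right-hand side of the Lorenz equations."""
    x, y, z = state
    return (S * (y - x), R * x - y - x * z, x * y - B * z)

def rk4_step(state, dt, R, S, B):
    """Advance the state by one fourth-order Runge-Kutta step."""
    def shifted(base, slope, scale):
        return tuple(b + scale * s for b, s in zip(base, slope))
    k1 = lorenz(state, R, S, B)
    k2 = lorenz(shifted(state, k1, dt / 2), R, S, B)
    k3 = lorenz(shifted(state, k2, dt / 2), R, S, B)
    k4 = lorenz(shifted(state, k3, dt), R, S, B)
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )

def integrate(start, R, S=16.0, B=4.0, dt=0.005, steps=20_000):
    """Integrate from `start` for steps * dt time units; return the final state."""
    state = start
    for _ in range(steps):
        state = rk4_step(state, dt, R, S, B)
    return state

# R=15: a trajectory started near the fixed point settles onto it.
final = integrate((7.0, 7.0, 14.0), R=15.0)
target = math.sqrt(4.0 * (15.0 - 1.0))   # x = y = sqrt(B (R - 1)), z = R - 1
print(final, target)

# R=45: two nearly identical starts end up far apart.
a = integrate((7.0, 7.0, 14.0), R=45.0)
b = integrate((7.0, 7.0, 14.0 + 1e-9), R=45.0)
print(math.dist(a, b))
```

Under these assumptions the R=15 run lands on the fixed point with $z = R - 1 = 14$, while the R=45 runs separate even though they start only 1e-9 apart.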

Python code (and the data files if you do not have TISEAN installed) for replicating the above can be found here.

Monday, June 4, 2012

Monday 4 June 2012 at the 2012 CSSS...

Today's lecture, given by Prof. Liz Bradley, focused on the basics of non-linear (mostly chaotic) dynamics in both discrete and continuous time. Much (all?) of the material in the lecture I had already encountered before in my own reading, but it was nice to get a refresher. What follows is my summary of our discussion of the dynamical properties of that classic example of chaotic dynamics in discrete time, the logistic map. The seminal reference for the logistic map is probably the 1976 Nature paper by Robert May.

The Logistic Map is an innocent looking non-linear equation:

X_{t+1} = r X_t (1 - X_t)

where the state space of the model is the unit interval [0, 1] and r is a parameter that varies on (0, 4].

Some trajectories of the logistic map for various values of r:

If 0 < r ≤ 1, then the dynamics are trivial: the model will converge to X = 0 no matter the initial condition. Suppose that r = 2. In this case, the dynamics of the model are also pretty boring: convergence to a unique fixed point. No matter the initial condition, if r = 2, all trajectories of the logistic map will converge (quite quickly) on a unique steady state value of 1/2.

Now suppose that r = 2.919149. In this case, the result is still convergence to a unique fixed point; however, the dynamics are more interesting: the trajectories now exhibit damped oscillations.

For values of r satisfying 3 < r < 3.45 (roughly!), you get a 2-cycle:

For r = 3.8285, one gets the famous 3-cycle which is one of the generally accepted indicators of chaotic dynamics (note that I have changed the initial condition from 0.2 to 0.5 to eliminate the transient, which makes the cobweb diagram a bit cleaner):

Finally, for r = 4 we get an example of chaotic dynamics. Note that the deterministic trajectory for the logistic map looks incredibly "random."
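The regimes described above are easy to check by brute-force iteration. A minimal sketch in plain Python follows; the function name is mine, and r = 3.2 is just one illustrative value from the 2-cycle range.

```python
def logistic_trajectory(r, x0=0.2, n=1000):
    """Return [x_0, x_1, ..., x_n] for X_{t+1} = r X_t (1 - X_t)."""
    xs = [x0]
    for _ in range(n):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs

# Print the last few iterates for a value of r from each regime.
for r in (0.5, 2.0, 2.919149, 3.2, 4.0):
    tail = logistic_trajectory(r)[-4:]
    print(r, [round(x, 4) for x in tail])
```

For r = 2.919149 the iterates approach the non-zero fixed point 1 - 1/r, alternating above and below it on the way in, which is the damped oscillation mentioned above. For r = 3.2 the tail flips between two values.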

3D plot of the phase space for the logistic map:

One of the reasons why models with chaotic dynamics, such as the logistic map, exhibit sensitive dependence on initial conditions is that such maps repeatedly "stretch and fold" the state space over which they are defined. While 2D phase plots give a sense of how the logistic map "stretches" the state space, a 3D phase plot is a really cool way to see how the logistic map "folds" the state space.
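Sensitive dependence can also be seen numerically. The sketch below (my own illustration, not the code behind the plots) follows two trajectories at r = 4 that start 1e-10 apart, and then estimates the Lyapunov exponent as the long-run average of log|f'(x)| with f'(x) = r(1 - 2x). The starting point and iteration counts are arbitrary choices.

```python
import math

def step(x, r=4.0):
    """One iteration of the logistic map."""
    return r * x * (1.0 - x)

# Two trajectories whose initial conditions differ by 1e-10.
a, b = 0.2, 0.2 + 1e-10
gaps = []
for _ in range(60):
    a, b = step(a), step(b)
    gaps.append(abs(a - b))

# Lyapunov exponent estimate: average of log|f'(x)| along one trajectory.
x, total, n = 0.2, 0.0, 5000
for _ in range(n):
    total += math.log(abs(4.0 * (1.0 - 2.0 * x)))
    x = step(x)
lyapunov = total / n

print(gaps[4], max(gaps), lyapunov)
```

The gap stays tiny for the first few iterations and then grows to the same order as the state space itself. For r = 4 the exponent is known to equal ln 2 ≈ 0.693, so the estimate gives a quick sanity check.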

If you are interested, the Python code to replicate the above graphics can be found here. I am still working on the code for the bifurcation diagram, estimating Feigenbaum's constant, and for calculating Lyapunov exponents.

Friday, June 1, 2012

Santa Fe bound...

Stopping over in Alexandria, VA to visit friends on the way to Santa Fe for the 2012 CSSS. Posting will be more frequent (I hope!) over the next month.

Friday, March 16, 2012

Equity returns: where power-laws go to die? Maybe...

A major criticism of previous empirical work assessing support for the power-law as a model for equity returns is that very few (any?) of the studies assess goodness-of-fit of the power-law model. This post summarizes the results of goodness-of-fit testing for the power-law as a model for equity returns and is a continuation of my previous posts on the power-law as a model for equity returns using data on U.S. equities listed on the Russell 1000 index.

I implement two versions of the KS goodness-of-fit tests suggested in Clauset et al (SIAM, 2009):

- Parametric version: conceptually and computationally simple; assumes estimated xmin is the "true" power-law threshold and simulates data under the null hypothesis of a power-law with exponent equal to estimated α

- Non-parametric version: conceptually less straightforward and computationally very intensive because it takes into account the fact that xmin is estimated from the data.
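For readers who want to see the mechanics, here is a minimal sketch of the parametric version only, written with NumPy. It treats xmin as known, fits α by maximum likelihood, and compares the observed KS distance with KS distances from synthetic power-law samples. The function names, the 200 repetitions, and the synthetic test data are my own assumptions; this is not the code used to produce the results in this post.

```python
import numpy as np

def fit_alpha(tail, xmin):
    """Maximum likelihood (Hill) estimate of alpha for a continuous power law."""
    return 1.0 + len(tail) / np.sum(np.log(tail / xmin))

def ks_distance(tail, xmin, alpha):
    """Largest gap between the empirical CDF and the fitted power-law CDF."""
    x = np.sort(tail)
    n = len(x)
    model = 1.0 - (x / xmin) ** (1.0 - alpha)
    upper = np.arange(1, n + 1) / n - model
    lower = model - np.arange(0, n) / n
    return max(upper.max(), lower.max())

def sample_power_law(n, xmin, alpha, rng):
    """Inverse-CDF draws from a continuous power law on [xmin, inf)."""
    return xmin * (1.0 - rng.random(n)) ** (-1.0 / (alpha - 1.0))

def parametric_ks_pvalue(tail, xmin, reps=200, seed=0):
    """Share of synthetic power-law samples that fit worse than the data."""
    rng = np.random.default_rng(seed)
    alpha = fit_alpha(tail, xmin)
    d_obs = ks_distance(tail, xmin, alpha)
    worse = 0
    for _ in range(reps):
        fake = sample_power_law(len(tail), xmin, alpha, rng)
        worse += int(ks_distance(fake, xmin, fit_alpha(fake, xmin)) >= d_obs)
    return alpha, d_obs, worse / reps

# Illustrative data only: a true power law and a shifted exponential.
rng = np.random.default_rng(42)
pl_data = sample_power_law(2000, 1.0, 2.5, rng)
exp_data = 1.0 + rng.exponential(1.0, 2000)

alpha_pl, d_pl, p_pl = parametric_ks_pvalue(pl_data, 1.0)
alpha_ex, d_ex, p_ex = parametric_ks_pvalue(exp_data, 1.0)
print(alpha_pl, p_pl)
print(alpha_ex, p_ex)
```

The non-parametric version would wrap the same loop around a step that re-estimates xmin on every bootstrap sample, which is where the extra computational cost comes from.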

Using the parametric version of the KS goodness-of-fit test I am able to reject the power-law model as plausible (i.e., p-value ≤ 0.10) for 17% of positive tails and 12% of negative tails. I would not describe these results as overwhelmingly "anti-power-law," but I would remind readers that the parametric version of the goodness-of-fit test sets a lower-bound on support for the power-law (see my discussion of goodness-of-fit results for mutual funds for more details). Here is a histogram of the p-values for the positive and negative tails of equities in my sample:

The results obtained using the more flexible non-parametric KS test are much less supportive of the power-law model. I reject the power-law model as plausible for roughly 44% of positive tails and 37% of negative tails. Here is another histogram of the goodness-of-fit p-values:

Clearly the non-parametric goodness-of-fit results are more damning for the power-law model than the parametric results. But why?

The discrepancy could be due to sample size effects. The average number of tail observations for a given stock in my sample is 313 (positive tail) and 333 (negative tail): using daily data, I simply do not have that many tail observations to work with. Because of the small sample size I need to rely on the extra flexibility of the non-parametric version of the goodness-of-fit test to be able to reject the power-law model as plausible. I suspect that this would not be the case if I had access to the TAQ data set used by Gabaix et al (Nature, 2003). It is also worth noting that the more data I get, the more likely I am to reject the power-law as plausible. Specifically, for those equities for which I reject the power-law model as plausible, either for the positive or negative tail (or both), I have a larger average number of both total observations and observations in the tail. This sample size dynamic is something I have observed fairly consistently whilst fitting and testing power-law models to various data sets. The more data I obtain, the less support I find for a power-law and the more support I tend to find for heavy-tailed alternatives (particularly the log-normal).

While I interpret the above results of goodness-of-fit tests as being decidedly against the hypothesis that the power-law model is "universal," clearly the power-law is a plausible model for either the positive or negative (or both) tails of returns for some stocks. In my mind this immediately suggests that there might be meaningful heterogeneity in the tail behavior of asset returns and that an interesting research direction might be to explore economic mechanisms that could generate such diversity of tail behavior.

I end with a significant disclaimer. I am concerned that by ignoring the underlying time dependence (think "clustered volatility" and mean-reversion) in large returns, my goodness-of-fit test results might be biased against the power-law model. Suppose that Gabaix et al (Nature, 2003) are correct about equity returns having power-law tails. Given the dependence structure of returns, it could be the case that the typical KS distance between the best-fit power-law model and the "true" power-law is larger than what I estimate in implementing an iid goodness-of-fit test.

If this is the case, then perhaps the reason I reject the power-law model so often is because my observed KS distance is obtained from fitting a power-law model to dependent data, whereas my bootstrap KS distances are obtained by fitting a power-law to synthetic data that follows a "true" power-law but ignores the underlying dependence structure of returns. In order to address this issue, I will need to develop an alternative goodness-of-fit testing procedure that can mimic the time dependence in the returns data! Fortunately, I have some ideas (good ones I hope!) on how to proceed...

Monday, March 12, 2012

Zipf's Law does not hold for mutual funds!

Gabaix et al (Nature, 2003) and Gabaix et al (QJE, 2006) lay out an economic theory of large fluctuations in share prices based, in part, on the assumption that the size (as measured in dollars of assets under management) of investors in asset markets is well approximated by Zipf's law (i.e., a power-law with scaling exponent ζ ≈ 1 or α ≈ 2). Zipf's law has been purported to hold for cities (Zipf (Addison-Wesley, 1949), Gabaix (QJE, 1999), Gabaix (AER, 1999), Gabaix and Ioannides (2004), Gabaix (AER, 2011), etc), firms (Okuyama et al (Physica A, 1999), Axtell (Science, 2001), Fujiwara et al (Physica A, 2004)), banks (Aref and Pushkin (2004)), and mutual funds (Gabaix et al (QJE, 2006)). I say purported, because experience has taught me never to believe in a power-law that I haven't estimated myself!

In this post, I am going to provide evidence against the power-law as an appropriate model for mutual funds using data from the same source as Gabaix et al (QJE, 2006). The figure below shows two survival plots of the size, as measured in terms of $-value of assets under management, of U.S. mutual funds at the end of 2009 using data from CRSP.1 The top panel shows the entire data set; the second panel shows only the upper 20% of mutual funds (roughly those funds with assets under management greater than $1 billion) and is intended to match as closely as possible Figure VII from Gabaix et al (QJE, 2006).

Choosing a threshold to include only the largest 20% of mutual funds for a given year, Gabaix et al (QJE, 2006) report an average estimate for the power-law scaling exponent of ζ ≈ 1 (or α ≈ 2) over the period 1961-1999. Gabaix et al (QJE, 2006) estimate α using OLS on the upper CDF of the mutual fund distribution (although they report similar results using the Hill estimator).

Using my larger data set I estimate, via OLS and choosing the same 20% cut-off criterion (which leaves 1313 observations in the tail), a scaling exponent of ζ = 1.11 (or α ≈ 2.11). Here is a plot showing my OLS estimates:

I estimated the scaling exponent using maximum likelihood in two ways. First, I apply the Hill estimator to the data using the same 20% cut-off as in Gabaix et al (QJE, 2006); second, I re-estimate the scaling exponent using the Hill estimator, while choosing the threshold parameter to minimize the KS distance as in Clauset et al (SIAM, 2009). Method 1 obtains an estimate of α = 1.97(3), while method 2 obtains estimates of α = 2.04(3) and xmin = $1.12 billion (which leaves 1077 observations in the tail). Note that the KS distance, D, for each of the maximum likelihood fits is smaller than the KS distance obtained using the OLS estimate of α.

Numbers in parentheses show the amount of uncertainty in the final digit (obtained using a parametric bootstrap to estimate the standard error).
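To illustrate the difference between the two estimators, here is a small sketch that applies both to the top 20% of a synthetic sample. The data are simulated from a pure power law with α = 2 (not the CRSP data), and the function names and the exact form of the OLS regression (log survival probability on log size) are my assumptions about one reasonable way to set this up.

```python
import numpy as np

def ols_zeta(sizes, top_share=0.2):
    """Minus the OLS slope of log survival probability on log size (top funds only)."""
    x = np.sort(sizes)
    n = len(x)
    k = int(top_share * n)
    tail = x[-k:]
    survival = np.arange(k, 0, -1) / n   # P(X >= x) for each tail point
    slope, _ = np.polyfit(np.log(tail), np.log(survival), 1)
    return -slope

def hill_alpha(sizes, top_share=0.2):
    """Hill (maximum likelihood) estimate of alpha, using the smallest tail point as xmin."""
    x = np.sort(sizes)
    k = int(top_share * len(x))
    tail = x[-k:]
    return 1.0 + k / np.sum(np.log(tail / tail[0]))

# Synthetic sizes from a power law with alpha = 2 (zeta = 1); illustrative only.
rng = np.random.default_rng(7)
sizes = (1.0 - rng.random(10000)) ** (-1.0)

zeta_ols = ols_zeta(sizes)
alpha_hill = hill_alpha(sizes)
print(zeta_ols, 1.0 + zeta_ols, alpha_hill)
```

On clean power-law data the two approaches roughly agree; the differences show up on real data, where the OLS fit weights every point on the log-log plot equally and offers no natural standard error.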

Parameter uncertainty is estimated using the bootstrap:

- Using 20% cut-off and a parametric bootstrap, I estimate a se for α of 0.026 and a corresponding 95% confidence interval of (1.912, 2.013)

- Choosing xmin via Clauset et al (SIAM, 2009) and using a parametric bootstrap, I estimate a se for α of 0.032 and a corresponding 95% confidence interval of (1.976, 2.098)

- Finally, choosing xmin via Clauset et al (SIAM, 2009) and using a non-parametric bootstrap, I estimate a se for α of 0.059 and a corresponding 95% confidence interval of (1.932, 2.113); the se for xmin is $0.530 B with a corresponding 95% confidence interval of ($0.398 B, $1.332 B)
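The bootstrap calculations above can be sketched as follows. This is a simplified illustration on synthetic data: xmin is held fixed in both bootstraps (the full non-parametric procedure would re-estimate xmin on every resample), and the sample size, number of repetitions, and names are my own choices.

```python
import numpy as np

def hill_alpha(tail, xmin):
    """Maximum likelihood estimate of alpha for a continuous power law."""
    return 1.0 + len(tail) / np.sum(np.log(tail / xmin))

def draw_power_law(n, xmin, alpha, rng):
    """Inverse-CDF draws from a continuous power law on [xmin, inf)."""
    return xmin * (1.0 - rng.random(n)) ** (-1.0 / (alpha - 1.0))

rng = np.random.default_rng(1)
xmin, n = 1.0, 1000
tail = draw_power_law(n, xmin, 2.0, rng)   # synthetic stand-in for the tail data
alpha_hat = hill_alpha(tail, xmin)

parametric, nonparametric = [], []
for _ in range(500):
    # Parametric: simulate from the fitted power law, then re-estimate.
    fake = draw_power_law(n, xmin, alpha_hat, rng)
    parametric.append(hill_alpha(fake, xmin))
    # Non-parametric (simplified): resample the data with replacement.
    resample = rng.choice(tail, size=n, replace=True)
    nonparametric.append(hill_alpha(resample, xmin))

se_par = np.std(parametric, ddof=1)
ci_par = np.percentile(parametric, [2.5, 97.5])
se_non = np.std(nonparametric, ddof=1)
print(alpha_hat, se_par, ci_par, se_non)
```

As a cross-check, the asymptotic standard error of the Hill estimator is (α - 1)/√n, which gives about 0.027 for α = 1.97 with 1313 tail observations and about 0.032 for α = 2.04 with 1077, in line with the parametric bootstrap numbers reported above.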

However, what about goodness-of-fit? Good data analysis is a lot like good detective work, and it is important to collect as much evidence as possible, relevant to testing the hypothesis at hand, before passing judgement. As stressed in Clauset et al (SIAM, 2009), an assessment of the goodness-of-fit of the power-law model is an important piece of relevant statistical evidence. Here are my goodness-of-fit test results:

- Using a 20% cut-off as suggested in Gabaix et al (QJE, 2006) along with the parametric version of the KS goodness-of-fit test I obtain a p-value of roughly 0.00 using 2500 repetitions, which suggests that the power-law model is not plausible.

- Choosing xmin via Clauset et al (SIAM, 2009) and using the parametric version of the KS goodness-of-fit test I obtain a p-value of roughly 0.19 using 2500 repetitions, which suggests that the power-law model is plausible.

- Finally, choosing xmin via Clauset et al (SIAM, 2009) and using the non-parametric bootstrap version of the KS goodness-of-fit test I obtain a p-value of roughly 0.02 using 2500 repetitions, which again suggests that the power-law model is not plausible.

Note that implementing the non-parametric version of the KS goodness-of-fit test basically shifts and "condenses" the sampling distribution of the KS distance (relative to both parametric versions). Taking into account the additional flexibility of the Clauset et al (SIAM, 2009) procedure for fitting the power-law null model reduces both the mean and variance of sampling distribution of the KS distance, D.

Quick test of alternative hypotheses. A very plausible alternative distribution for mutual funds is the log-normal (recall Gibrat's law of proportionate growth would predict log-normal). Can I reject the power-law in favour of the log-normal using likelihood ratio tests? YES!

- Using a 20% cut-off as suggested in Gabaix et al (QJE, 2006) the Vuong LR test statistic is -3.63 with a two-sided p-value of roughly 0.00 (which implies that, given the data, I can distinguish between the power-law and log-normal) and a one-sided p-value of roughly 0.00 (implying that I can reject the power-law in favour of the log-normal!)

- Choosing xmin via Clauset et al (SIAM, 2009) the Vuong LR test statistic is -2.27 with a two-sided p-value of roughly 0.023 (which implies that, given the data, I can distinguish between the power-law and log-normal) and a one-sided p-value of roughly 0.012 (implying that I can reject the power-law in favour of the log-normal!)
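For anyone curious about what goes into these test statistics, here is a rough sketch of a Vuong-style likelihood ratio test. It is an illustration under several assumptions of mine: the data are simulated from a log-normal, the truncated log-normal is fitted by a crude grid search rather than a proper optimizer, and all names are hypothetical. A negative statistic favours the log-normal.

```python
import math
import numpy as np

def power_law_loglik(x, xmin):
    """Pointwise log-likelihood of the MLE continuous power law on [xmin, inf)."""
    alpha = 1.0 + len(x) / np.sum(np.log(x / xmin))
    return np.log((alpha - 1.0) / xmin) - alpha * np.log(x / xmin)

def lognormal_loglik(x, xmin, mus, sigmas):
    """Pointwise log-likelihood of the best log-normal truncated at xmin (grid search)."""
    logx = np.log(x)
    best, best_sum = None, -math.inf
    for mu in mus:
        for sigma in sigmas:
            z = (math.log(xmin) - mu) / sigma
            # Log of the probability mass above xmin (normalizes the truncated density).
            log_tail_mass = math.log(0.5 * math.erfc(z / math.sqrt(2.0)))
            ll = (-logx - math.log(sigma) - 0.5 * math.log(2.0 * math.pi)
                  - (logx - mu) ** 2 / (2.0 * sigma ** 2) - log_tail_mass)
            if ll.sum() > best_sum:
                best, best_sum = ll, ll.sum()
    return best

def vuong(ll_a, ll_b):
    """Normalized log-likelihood ratio with two-sided and one-sided p-values."""
    d = ll_a - ll_b
    stat = d.sum() / (math.sqrt(len(d)) * d.std())
    p_two = math.erfc(abs(stat) / math.sqrt(2.0))
    p_one = 0.5 * math.erfc(-stat / math.sqrt(2.0))   # P(Z <= stat)
    return stat, p_two, p_one

# Illustrative data: the upper half of a log-normal sample, so xmin = 1.
rng = np.random.default_rng(3)
data = rng.lognormal(0.0, 1.0, 6000)
xmin = 1.0
tail = data[data >= xmin]

ll_pl = power_law_loglik(tail, xmin)
ll_ln = lognormal_loglik(tail, xmin, np.linspace(-2.0, 2.0, 41), np.linspace(0.3, 3.0, 28))
stat, p_two, p_one = vuong(ll_pl, ll_ln)
print(stat, p_two, p_one)
```

The p-value formulas reproduce the numbers quoted above: a statistic of -2.27 gives a two-sided p-value of about 0.023 and a one-sided p-value of about 0.012.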

I think it matters quite a bit for the model put forward in Gabaix et al (QJE, 2006)! In Gabaix et al (QJE, 2006) investors take as given that the distribution of investors' size follows a power-law. Specifically, an investor makes use of the distribution of investor size in calculating his optimal trading volume. Gabaix et al (QJE, 2006) relies on the power-law being a "good approximation" to the true distribution of investor size in order to justify investors taking a power-law distribution as given. I have provided evidence that the power-law is not a plausible model, and that a log-normal distribution is a significantly better fit. If the true distribution is not a power-law, then agents in Gabaix et al (QJE, 2006) are effectively solving a mis-specified optimization program and there is no longer any guarantee that the solution to the properly specified optimization program will result in power-law tails for equity and volume (paradoxically, however, this might turn out to be "good" for Gabaix et al (QJE, 2006) in the sense that I have argued in previous posts that the tails of equity returns are not power-law anyway!).

However, whether or not it matters if a distribution is log-normal, power-law, or simply "heavy-tailed" depends on context. In this case a log-normal distribution is consistent with Gibrat's law of proportionate growth. Gibrat's law applied to investor size says that if the growth rate of investors' assets under management is independent of the amount of assets currently under management, then the distribution of investor size will follow a log-normal distribution. One could easily test whether or not the growth rate of mutual funds is independent of size. Maybe someone already has?
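A quick simulation shows both halves of this argument. The sketch below is purely illustrative: the number of funds, the number of periods, and the uniform distribution of growth rates are assumptions of mine, not features of the CRSP data. Growth is drawn independently of size, the resulting log sizes are checked for approximate normality, and a simple regression of growth on lagged log size plays the role of the independence test.

```python
import numpy as np

rng = np.random.default_rng(0)
n_funds, n_periods = 10000, 200

# Growth rates drawn independently of current size (Gibrat's assumption).
growth = rng.uniform(-0.10, 0.12, size=(n_periods, n_funds))
log_size = np.cumsum(np.log1p(growth), axis=0)   # log size after each period

# If sizes are log-normal, log sizes should look normal:
# skewness near 0 and excess kurtosis near 0.
final = log_size[-1]
z = (final - final.mean()) / final.std()
skewness = np.mean(z ** 3)
excess_kurtosis = np.mean(z ** 4) - 3.0

# Independence check: regress the last period's growth on lagged log size.
slope, _ = np.polyfit(log_size[-2], growth[-1], 1)
print(skewness, excess_kurtosis, slope)
```

With real fund data the same regression (growth on lagged log size) would be the natural first test: a slope clearly different from zero would be evidence against Gibrat's law.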

1 Gabaix et al (QJE, 2006) use data on mutual fund assets from 4th quarter of 1999, whereas I use the larger and more recent data set from 4th quarter of 2009.

2 Assessing goodness-of-fit using a parametric version of the KS goodness-of-fit test that takes the optimal threshold chosen using the Clauset et al (SIAM, 2009) method as given is both conceptually easier to understand, and computationally simpler to implement. This procedure also sets an effective lower bar for the plausibility of the power-law model: if the power-law model is not plausible using this parametric KS goodness-of-fit test, then it will be even less plausible if I use the more flexible (and more rigorous) non-parametric KS goodness-of-fit test.
